Hello m13

This looks great! Having Makehuman integrated with Chordata would be a great contribution!

Please tell us a little more about this project.

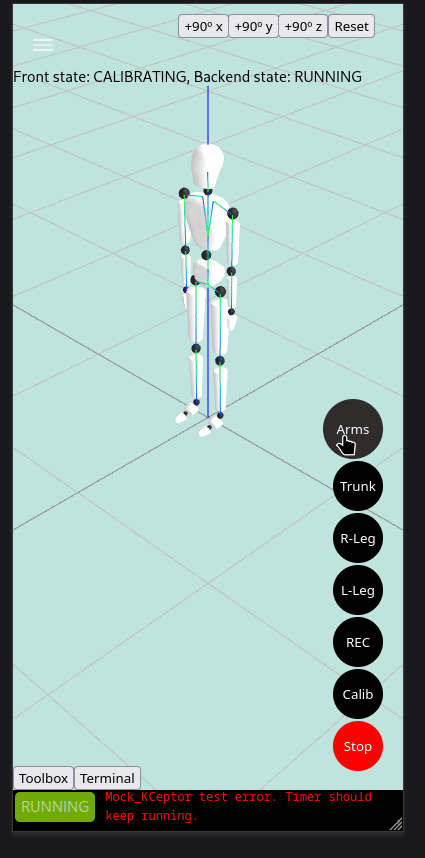

We are currently working on the complete version of the remote console, which lets you manage and visualize the capture in real time in a webapp. The communication between backend and frontend using COPP through Websockets is already implemented, together with many other features. Perhaps you would like to take a look at it and copy the parts of the code that are useful to your project.

(of course, there's a lot of styling to be done; we are currently working on implementing the core features)

And who knows, perhaps in the future we can include your makehuman.js implementation directly in the remote console 😉